The Judgment Function Is Not a Task

Anthropic just mapped which jobs AI is coming for. Production professionals should read it carefully, and then understand why the most important part of what they do does not appear on the map.

Anthropic published a labor market research paper this week that identifies, with more precision than anything previously available, which occupations are most exposed to AI displacement. [https://www.anthropic.com/research/labor-market-impacts] The methodology is rigorous. The findings are sobering. And if you coordinate complex productions for a living, your first instinct upon reading it may be something between unease and recognition.

Read it. Then read it again. And then notice what it cannot measure.

What the Research Actually Captures

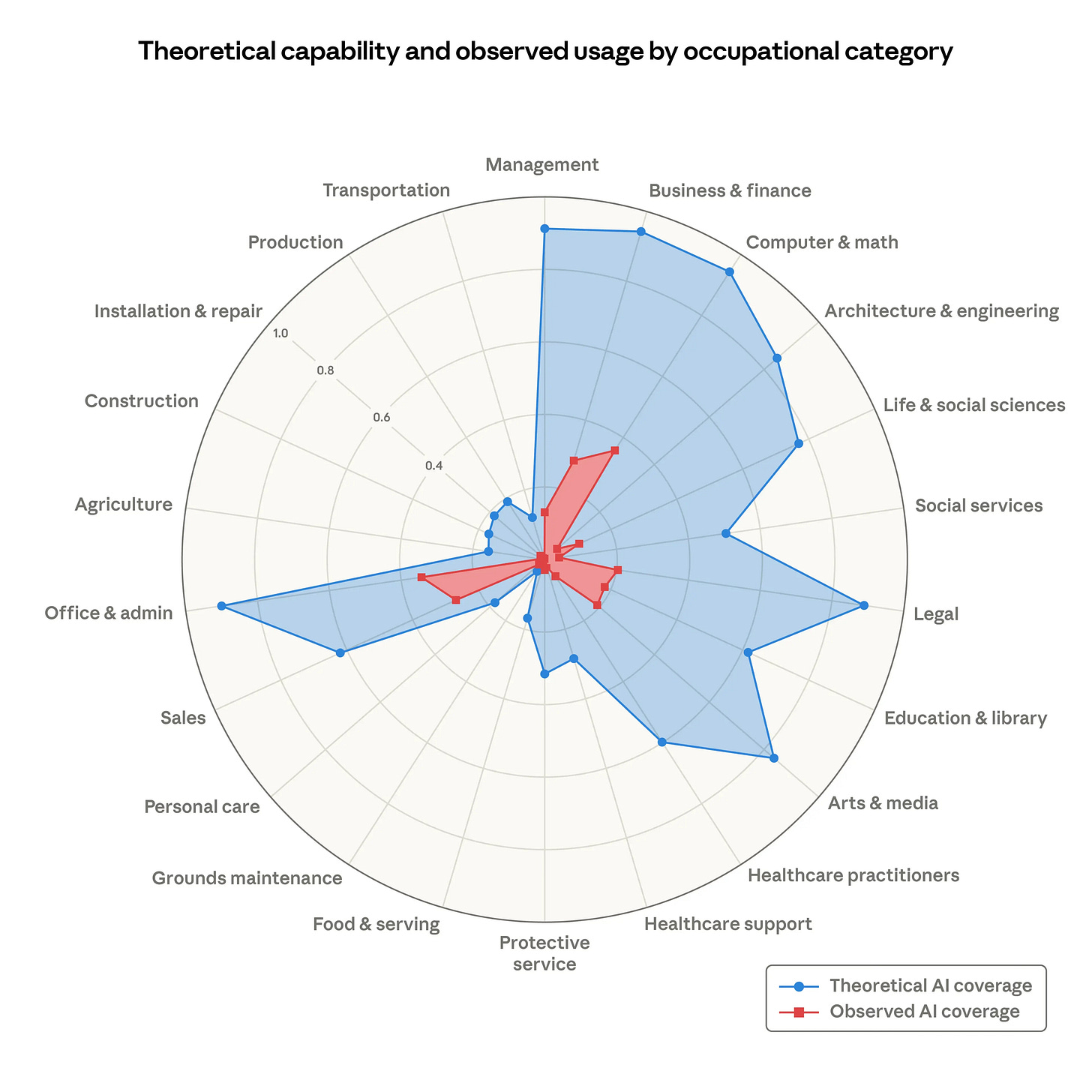

The researchers built a measure called “observed exposure” that combines what AI tools could theoretically do in a given job with what they are actually doing right now, as evidenced by real usage data. The gap between those two numbers is large. The research is explicit that the gap will close as capability advances and adoption deepens.

The knowledge work that holds a production together sits squarely in the high-exposure categories. Reading daily production reports, cross-referencing call sheets, synthesizing patterns across cost summaries and time cards: these are document analysis and pattern detection tasks. The research is correct that AI can theoretically handle them. It is also correct that AI is beginning to handle them in practice, and that the pace of that shift is accelerating.

So far, this confirms what most experienced production professionals already sense. The synthesis layer of their work is under pressure. The documents they have been reading manually, and expertly, for years can increasingly be ingested and summarized by systems that do not need to sleep.

But here is what the research cannot capture, because no occupational task taxonomy has a category for it.

The Function Above the Synthesis Layer

There is a form of judgment in production operations that does not appear in any document. It is not a task that can be listed in a job description or enumerated in an O*NET database. It is the compound interpretation of synthesized information in a context where the physical operation moves forward on its own clock, regardless of whether the analysis is complete.

A line producer reading yesterday’s DPR is not simply extracting data. She is running that data through a mental library built across dozens of productions, calibrating it against what she knows about this specific director’s tendencies, this crew’s rhythm in the first two weeks of a shoot, this location’s history of logistical friction. The 45-minute late first shot is not just a data point. It is a data point in context. And the context is not in the document. It never was.

This is the judgment function. It is not augmented by AI in any meaningful way if the AI has no access to structured operational context. A general-purpose model reading a DPR is pattern-matching against language. An experienced line producer reading the same DPR is pattern-matching against operational history. Those are not the same activity, and the gap between them is not closed by model capability alone.

The research Anthropic published measures exposure at the task level. The judgment function is not a task. It is what happens when someone with genuine operational expertise applies accumulated pattern recognition to synthesized signals in real time, in an environment where being wrong has immediate physical consequences.

That function is, if anything, becoming more valuable as the synthesis layer automates. Not less.

What Automation of the Synthesis Layer Actually Does

Here is the shift that matters and that most commentary on AI and production work misses entirely.

The experienced line producer who currently spends two hours each morning reading and cross-referencing operational documents is not spending those two hours doing her most valuable work. She is doing the prerequisite for her most valuable work. The judgment comes after the synthesis. The synthesis just happens to currently require a human, because no system has been built to do it reliably in the domain-specific way that makes the output trustworthy.

When that synthesis layer is handled by a system that does it continuously, without fatigue, across every signal simultaneously, the judgment function does not disappear. It moves earlier and operates with more complete information. The producer is no longer deciding what the documents mean after reviewing them in sequence. She is deciding what to do about a compounding risk pattern that was surfaced at 6am, before the crew call, while there is still time to intervene.

This is the change in timing that makes operational intelligence genuinely valuable to experienced professionals rather than merely threatening to them. The synthesis is not replacing the judgment. It is changing when the judgment gets applied, and therefore whether it can actually prevent the thing it is designed to prevent.

In production, prevention is everything. A problem surfaced on day eight is recoverable. The same problem surfaced on day fifteen, after it has been absorbed through creative compromise that will never appear in a budget line, is not recoverable. The movie has already gotten smaller. The schedule has already been compressed in ways that constrain what the director can achieve. The budget looks flat. The creative product does not.

Why Domain Context Is the Non-Negotiable Variable

There is a version of this story where general-purpose AI, as it continues to improve, simply absorbs the synthesis layer and delivers reliable operational intelligence without the need for purpose-built domain infrastructure. That version is worth taking seriously as a long-term possibility and rejecting as a near-term reality.

The reason is not model capability. The models are capable enough, right now, to read a production report. The reason is the calibration problem.

Not all signals are equal. A first-shot delay of 45 minutes on day two of a location-heavy drama means something different than the same delay on day twelve following three consecutive days of overtime. A department running 20% over on setup time in week one, for a director known to push setups hard at the start of a shoot and settle by week three, means something different than the same pattern in a production where no such tendency has been established. These contextual thresholds are not in any document. They are in the heads of practitioners who have seen enough productions to know the difference between normal variance and the opening signal of a cascade.
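To make the calibration problem concrete, here is a minimal sketch of what a context-dependent threshold might look like. Everything in it is an assumption for illustration: the `ShootContext` fields, the function names, and every number are hypothetical placeholders, not validated thresholds and not how any real system works. The point is only that the same reading produces a different answer once context is supplied.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShootContext:
    """Hypothetical context that a DPR alone does not carry."""
    shoot_day: int                     # 1-based day of principal photography
    consecutive_overtime_days: int     # overtime days immediately before today
    director_front_loads_setups: bool  # assumed flag supplied by a practitioner


def first_shot_delay_signal(delay_minutes: int, ctx: ShootContext) -> str:
    """Classify a late first shot. All numbers are illustrative placeholders."""
    # Assumption: early in a shoot, more delay is tolerated as normal variance.
    tolerance = 60 if ctx.shoot_day <= 5 else 45
    # Assumption: each preceding overtime day shrinks the tolerance by 10 minutes.
    tolerance -= 10 * ctx.consecutive_overtime_days
    return "escalate" if delay_minutes >= tolerance else "normal variance"


def setup_overrun_signal(overrun_pct: float, ctx: ShootContext) -> str:
    """Classify a setup-time overrun. All numbers are illustrative placeholders."""
    # Assumption: five-day shooting weeks, so days 1 to 10 are weeks one and two.
    in_early_weeks = ctx.shoot_day <= 10
    # A director known to push setups early earns a wider allowance at the start.
    if ctx.director_front_loads_setups and in_early_weeks:
        allowance_pct = 25.0
    else:
        allowance_pct = 10.0
    return "escalate" if overrun_pct > allowance_pct else "normal variance"


day_two = ShootContext(shoot_day=2, consecutive_overtime_days=0,
                       director_front_loads_setups=True)
day_twelve = ShootContext(shoot_day=12, consecutive_overtime_days=3,
                          director_front_loads_setups=False)

print(first_shot_delay_signal(45, day_two))
print(first_shot_delay_signal(45, day_twelve))
```

With these placeholder numbers, the same 45-minute delay reads as normal variance on day two and as an escalation on day twelve after three overtime days. The code is the easy part. The hard part is that the numbers have to come from real production data and expert validation, not from a guess.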

Building these thresholds into an operational intelligence system requires real production data and real expert validation. It requires the kind of pattern library that can only be assembled by working with practitioners who have the accumulated operational experience to distinguish the patterns that matter from the ones that do not.

A general-purpose model guessing at these thresholds in a high-stakes environment will produce outputs that experienced professionals correctly identify as unreliable and then stop using. We know this from direct experience. The production professionals who tried AI tools built for other domains and found them wanting were not wrong to reject them. They were right. The tools were not calibrated for their environment.

The judgment function that experienced production professionals carry cannot be replaced by a system that lacks their operational context. But it can be supported by a system that has been built with that context deliberately, with their participation, and validated against real operational outcomes.

The Professionals Who Will Use This Well

The Anthropic research identifies a real exposure for the synthesis-layer tasks that occupy a significant portion of production knowledge workers’ days. It does not identify any exposure for the compound judgment function that experienced professionals bring to the interpretation of those synthesized signals.

The professionals who will use operational intelligence well are exactly the ones whose judgment is most developed. The line producer with twenty years of credits is not at risk of being replaced by a system that reads DPRs. She is the person best positioned to use a system that reads every DPR simultaneously, surfaces the patterns that match validated risk indicators, and delivers that synthesis to her in time to act on it.

Her judgment does not become less valuable when the synthesis layer is automated. It becomes the scarce resource that determines whether the system’s outputs get translated into good decisions or ignored. The system is only as useful as the person interpreting its signals. And the person best equipped to interpret them is the one who has been doing this work long enough to have the internal pattern library that tells her when the system is right and when it is surfacing something that context explains away.

This is the professional the tool is built for. Not the coordinator who needs to learn what a DPR means. The producer who has read ten thousand of them and knows, before anyone says a word, which signal in this morning’s report deserves a phone call before the crew rolls.

What operational intelligence gives her is more time to make that call.

---

John Corser is the CPO and Co-Founder of [filmIQ.ai](https://filmiq.ai), a production intelligence platform for film and television. He is the former SVP of Production and Production Technology at NBCUniversal and a Daytime Emmy Award winner for Innovation.

Judgment comes from knowledge, context, and experience. Two of those things are hard for AI to be systemically good at.